In May, two generations of trillion-parameter models, at 1T and 1.5T, are slated for release in quick succession, marking the official entry of the four major AI giants into the decisive phase of the AGI competition.

Just moments ago, Musk erupted once again.

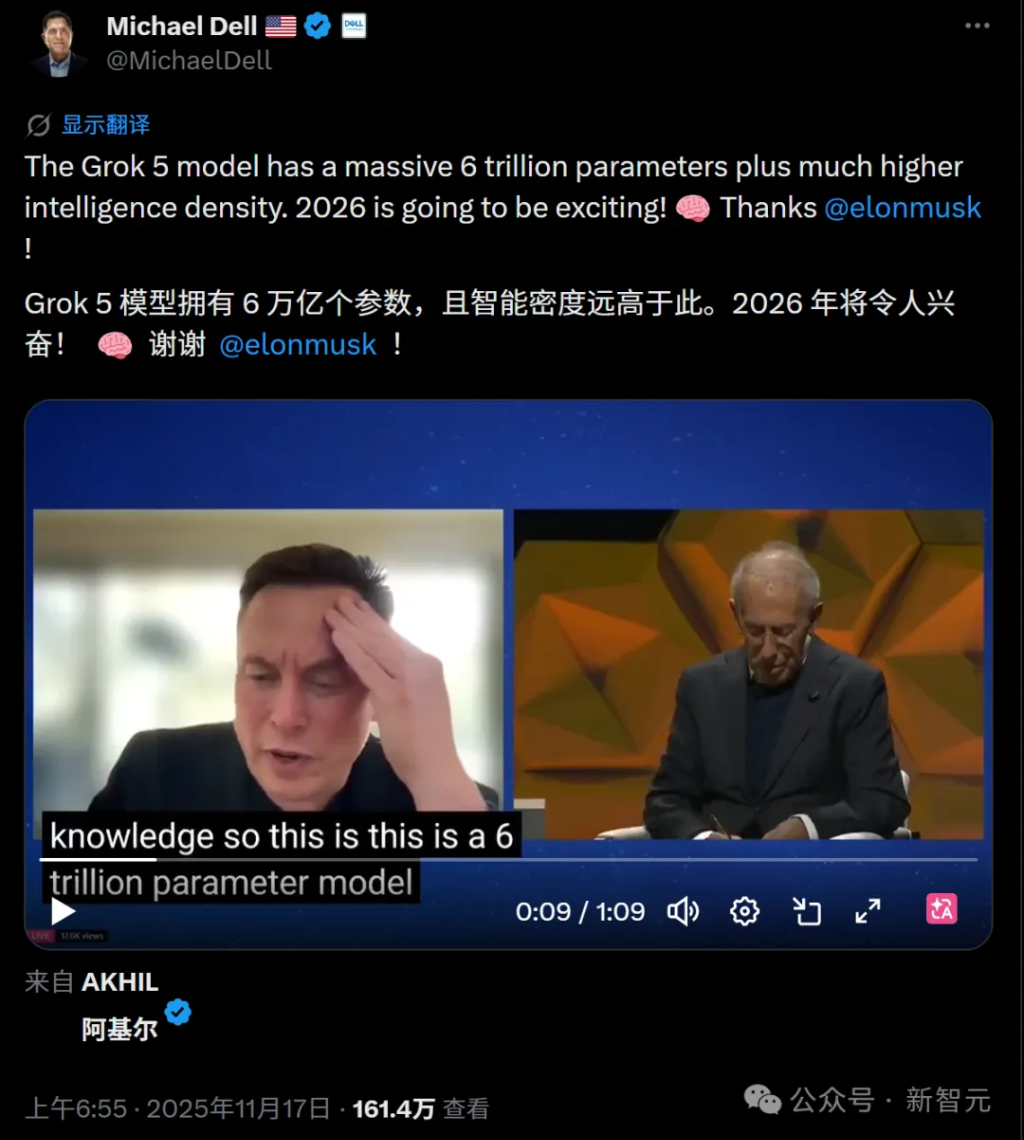

This man, known as the most disruptive figure on Earth, made a bold statement on the X platform – Grok 5 is AGI!

No embellishment, no 'probably,' 'perhaps,' or 'possibly.'

It was straightforward and unequivocal.

This level of audacity, or 'confidence,' is unparalleled.

Even more explosive, he simultaneously released a roadmap that left the entire industry gasping: launching Grok 4.4 with 1 trillion parameters in early May, followed by Grok 4.5 with 1.5 trillion parameters by the end of May.

Two generations of trillion-parameter behemoths within a single month!

The ultimate goal, Grok 5, is undergoing intensive training on the Colossus 2 supercomputing cluster at a scale of 6 trillion parameters.

This is nothing short of a revolution.

Grok 4.3 has just been released, and Grok 4.4 is already on the horizon.

Let us first review the timeline.

On April 17, xAI quietly launched Grok 4.3 Beta.

Note that there was no official blog post, no technical white paper, and no third-party benchmarking.

This approach is very much in line with SpaceX’s style.

However, on April 18, Musk clarified that the currently launched Grok 4.3 Beta is only an early test version, and the full version with 1 trillion parameters is still under training.

Now, the version with 1 trillion parameters may have completed training.

Here is a closer look at this aggressive timeline:

Grok 4.3 (released in mid-April) → The 0.5T parameter Beta version is now available, with supplementary training completed. It can already quickly convert complex neuroscience papers into professional PowerPoint presentations, and an Office plugin is under development.

Grok 4.4 (early May) → The number of parameters will double to 1 trillion (1T), and Musk claims that coding capabilities, long-context processing, and overall performance will see significant improvements.

Grok 4.5 (late May) → The parameter count will further increase to 1.5 trillion (1.5T), moving closer to the precursor of Grok 5.

Within a month, from 0.5T to 1T and then to 1.5T — this three-stage leap in parameter scale has never been seen before in the history of AI development.

One trillion parameters is not the end; six trillion is.

But 1.5T is just a warm-up.

The real main event is Grok 5 — a behemoth with six trillion parameters, currently being trained on the Colossus 2 supercomputing cluster in Memphis.

How powerful is this cluster?

It comprises 550,000 NVIDIA GB200/GB300 GPUs, with a total power consumption of 2 gigawatts — enough to power a city with a population of 1.5 million.

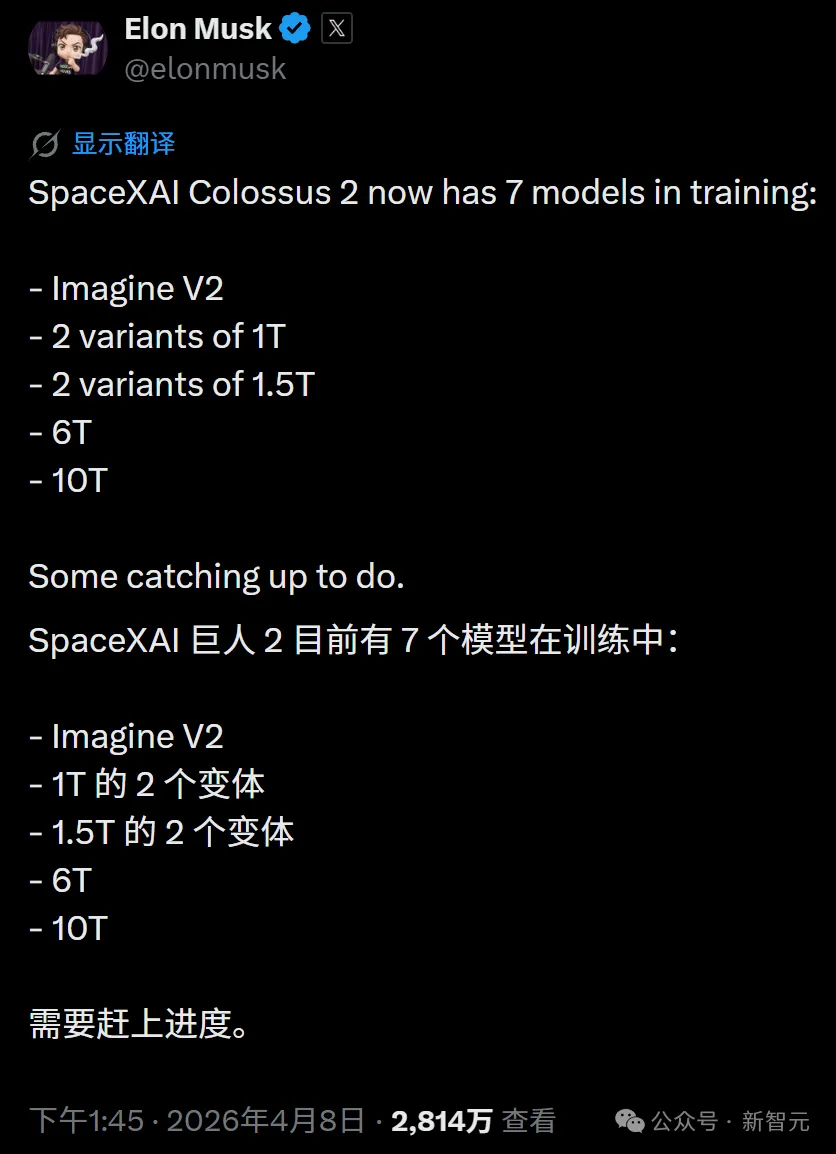
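As a rough sanity check on those figures, the quoted numbers can be divided out directly. The sketch below uses only the values cited above (2 gigawatts, 550,000 GPUs, a city of 1.5 million); the constant and function names are illustrative, and the figures themselves are as reported, not independently verified.

```python
# Figures as quoted in the article (not independently verified).
TOTAL_POWER_WATTS = 2e9      # 2 gigawatts of total cluster power
GPU_COUNT = 550_000          # NVIDIA GB200/GB300 GPUs
CITY_POPULATION = 1_500_000  # population in the article's comparison


def per_unit_kw(total_watts: float, units: int) -> float:
    """Average power per unit, in kilowatts."""
    return total_watts / units / 1000


kw_per_gpu = per_unit_kw(TOTAL_POWER_WATTS, GPU_COUNT)
kw_per_resident = per_unit_kw(TOTAL_POWER_WATTS, CITY_POPULATION)

print(f"{kw_per_gpu:.2f} kW per GPU")            # 3.64 kW per GPU
print(f"{kw_per_resident:.2f} kW per resident")  # 1.33 kW per resident
```

The per-GPU average is a facility-wide number: if the 2-gigawatt total covers the whole site, it would also include cooling, networking, and host hardware, not just the accelerators themselves.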

It has been revealed that Colossus 2 is simultaneously training seven models of varying scales, with parameter counts covering 1T, 1.5T, 6T, and even 10T.

In other words, Grok 4.4 and 4.5 are merely 'lightweight' products on this assembly line; the true centerpiece is Grok 5, with its 6T parameters, representing Musk’s ‘nuclear weapon.’

Musk previously stated at the Baron Capital investment conference that he believes the probability of Grok 5 achieving AGI is '10%, and steadily increasing.'

Now, he has gone so far as to declare outright that the answer to AGI is Grok 5.

Of course, one could say this is Musk's typical bombastic style.

But let’s not forget, when this man said he would land a rocket on an ocean barge, very few people in the world believed him at the time.

Can sheer parameter scaling really lead to AGI?

Objectively speaking, Musk's strategy embodies the classic 'scale-above-all' philosophy—bigger models, larger computing clusters, and more extensive training scales.

This approach has indeed driven exponential growth in AI capabilities over the past few years.

However, an increasing number of researchers have pointed out that there exists a fundamental gap between large language models and true general intelligence—a gap that cannot be bridged merely by stacking parameters.

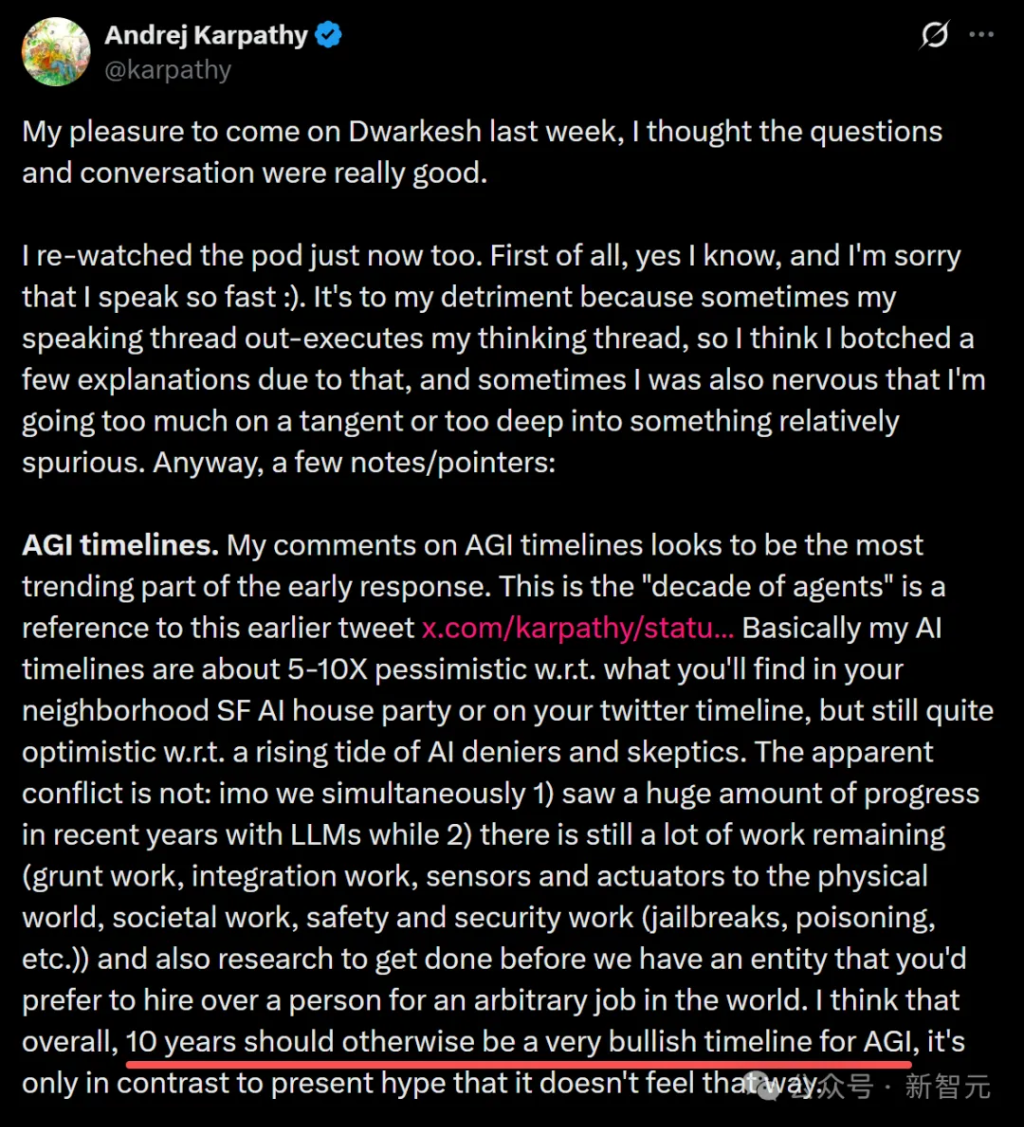

Andrej Karpathy, former Senior Director of AI at Tesla and co-founder of OpenAI, explicitly stated that AGI is still a decade away, far from the 'just around the corner' claims made by some industry leaders.

That said, xAI does hold a few unique cards that others don’t.

The real-time data stream from the X platform—68 million tweets per day—is a data source that OpenAI and Anthropic can only dream of.

The sensor data from Tesla's fleet of vehicles—millions of cars providing real-world driving scenarios—is a critical stepping stone toward embodied intelligence.

SpaceX-style engineering execution, which built a gigawatt-scale supercomputing cluster in 122 days, is unimaginable for any other company in Silicon Valley.

More noteworthy is the evolutionary roadmap of xAI’s multi-agent architecture.

From Grok 4.20's four-agent collaboration to Grok 4.20 Heavy's 16-agent system, and then to Grok 5's anticipated dynamic agent generation and cross-domain specialization—this trajectory is more likely than mere parameter expansion to approach the boundaries of AGI.

Conclusion

Returning to Musk’s resounding statement—the answer to AGI is Grok 5.

Believe it or not, one fact is undeniable: we are in the wildest moment in the history of AI.

The four major laboratories have successively released trillion-parameter models within just a few months, while the open-source GLM-5.1 has already surpassed closed-source frontier models on certain benchmarks.

Anthropic even achieved a SWE-bench Verified score of 93.9% with Claude Mythos Preview, a figure that everyone considered impossible just six months ago.

In May, Musk’s Grok 4.4 and 4.5 will be released consecutively, while OpenAI is likely to counter with GPT-5.5, and Anthropic’s Opus 4.7 has already taken the lead in the programming domain.

Who will be the first to cross that vague yet real threshold of AGI?

Perhaps Musk is right, or perhaps he is merely creating a smokescreen.

But one thing is certain—on the road to AGI, everyone has already hit the accelerator to the fullest.

Each coming day could potentially mark the dividing line between 'before' and 'after.'

Editor /rice