The weight distribution across the value chain of AI computing power infrastructure is also beginning to shift. The next wave of excess alpha returns will no longer be confined to the strongest leading companies in the AI GPU/AI ASIC sectors but will systematically spread to encompass the entire stack of AI computing power infrastructure, including CPUs, memory, PCBs, liquid cooling systems, ABF substrates, and broad semiconductor foundries.

At the start of trading on Friday in the U.S. stock market, the two super giants of x86 architecture CPUs — $Intel (INTC.US)$ and $Advanced Micro Devices (AMD.US)$ — both reached new all-time highs. Intel, the long-established American chip giant that pioneered the x86 architecture, surged over 27% at one point, driven by exceptionally strong earnings that exceeded expectations.

AMD, another dominant player in the chip industry focused on high-performance x86 architecture CPUs for AI data center servers, also performed strongly. Its stock price surged over 14% at the opening, hitting a new all-time high.

$Arm Holdings (ARM.US)$ , the owner of the ARM instruction set architecture, also saw its stock price soar to a new all-time high at the opening. This underscores investors' strong preference for the ARM architecture's advantages in energy efficiency and low power consumption.

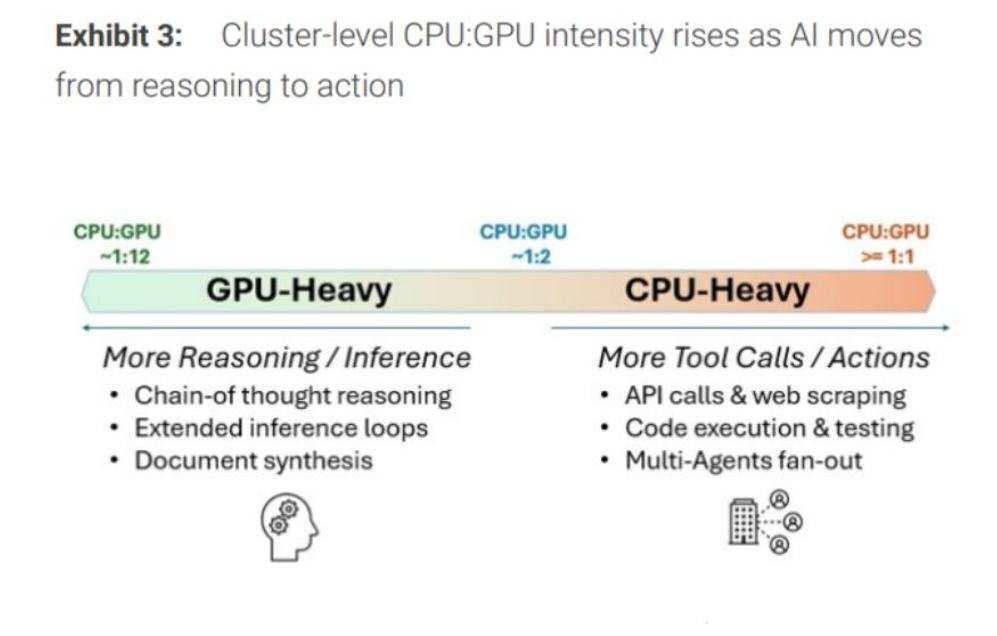

With Anthropic’s release of Claude Cowork, and with OpenClaw-like autonomous, task-executing AI agent tools expected to explode in popularity by 2026, the wave of AI agents is rapidly sweeping the globe. The bottleneck in AI computing architecture is shifting from GPUs, which focus on matrix multiplication and throughput, to data center CPUs centered on control flow, task orchestration, and memory/IO coordination. High-performance CPUs for large-scale AI data centers are now facing severe supply shortages.

Over the past two years, the AI narrative has been almost monopolized by GPUs, with CPUs often playing a secondary role in the AI arms race. However, as open-source AI agent workflows such as OpenClaw drive growth across inference workloads, data orchestration, task scheduling, memory access, network communication, and multi-tool invocation, the market has come to realize that without a powerful CPU as the system hub, GPU clusters cannot operate efficiently. This marks the return of CPUs from 'undervalued infrastructure' to the core stage of AI data centers, a clear 'Renaissance'-style revival.

In the era of AI agents, the computing power system is transitioning from simply stacking GPUs to more complex heterogeneous computing: CPUs must handle large-scale task scheduling, data movement, memory management, model invocation, toolchain orchestration, distribution of inference requests, database queries, network communication, and security isolation. In other words, CPUs are no longer just 'background components' in AI data centers but are re-emerging as the system hub and scheduling brain of AI factories. This aligns perfectly with the core imagery of a 'Renaissance': a traditional computing architecture once underestimated by the market and overshadowed by the halo of GPUs is regaining its value and capital market pricing power.

The vigorous construction of AI data centers has driven Intel's data center CPUs into a state of undersupply. Delivery times for some of Intel’s most in-demand high-performance server CPUs have extended to as long as six months. Prices for these high-performance, server-grade CPUs aimed at data centers have generally increased by 10% since the beginning of this year. This explains why Intel, a chip manufacturer whose stock had been sluggish for a year and a half, could surge over 120% this year and hit a new all-time high.

GPUs are no longer the sole dominators of computing power themes; the AI agent wave ignites CPUs.

Wall Street financial giants such as Morgan Stanley, Stifel, and DA Davidson believe that the two major PC and data center CPU leaders, Intel and Advanced Micro Devices, are in the most advantageous position to benefit from the record-level surge in demand for data center CPUs. Additionally, top analysts on Wall Street believe that memory chip giants will also benefit from the exponential expansion of CPU demand. Morgan Stanley believes that large U.S. memory manufacturers $Micron Technology (MU.US)$ and $SanDisk (SNDK.US)$ are also in an optimal position.

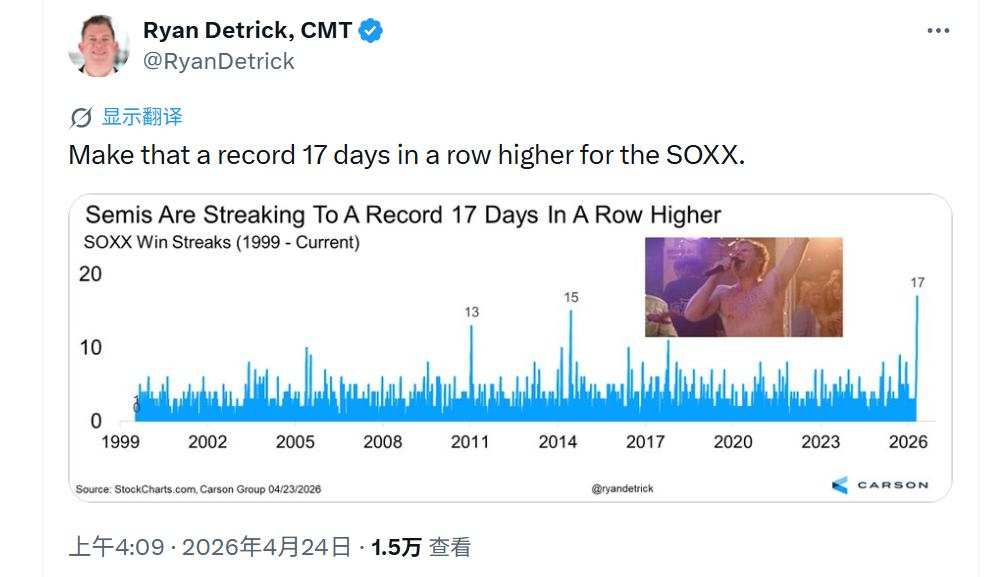

South Korea's stock market benchmark, the $Korea Composite Index (.KOSPI.KR)$ , in which Samsung ( $CSOP Samsung Electronics Daily (2x) Leveraged Product (07747.HK)$ ) and SK Hynix ( $CSOP SK Hynix Daily (2x) Leveraged Product (07709.HK)$ ) hold significant weight, has reached new highs despite deteriorating geopolitical tensions. $Taiwan Semiconductor (TSM.US)$ , a heavyweight stock known as the 'King of Chip Foundries' and one of the biggest winners of the AI boom, has driven Taiwan's stock market to new highs. With the $PHLX Semiconductor Index (.SOX.US)$ , known as the 'bellwether of chip stocks', achieving a record-breaking 17 consecutive sessions of gains, investors are increasingly confident that the 'AI computing power investment theme' will prevail over all market noise.

At the same time, the weight distribution across the value chain of AI computing power infrastructure is also beginning to shift. The next wave of excess alpha returns will no longer be exclusive to the strongest leaders in the AI GPU/AI ASIC domain but will systematically spread to encompass CPUs, memory, PCBs, liquid cooling systems, ABF substrates, and a broad spectrum of semiconductor foundry services within the full-stack AI computing power infrastructure layer. In this narrative transition, Wall Street financial giants such as Morgan Stanley believe that CPUs and DRAM/NAND memory chips for data centers are likely to emerge as the most significantly benefited subcategories of AI computing power.

Within the agent workflow, a substantial portion of workloads is not only consumed by token generation on GPUs but also expended on CPU-dominant processes such as Python interpretation and execution, web scraping, database querying, RAG index access, lexical processing, task queue scheduling, RPC/IPC communication, and KV state updates. This implies that user experience is increasingly determined not by the peak computing power of a single GPU but by whether the CPU has sufficient core count, thread concurrency, cache hierarchy, memory bandwidth, and PCIe/CXL/interconnect scheduling capabilities to support high-frequency tool invocation and high-density task switching. If there are deficiencies in CPU cores, memory subsystems, or I/O scheduling, even an abundance of nominal GPU computing power may collapse in utilization due to delays in data preparation, task coordination, and system wait times.
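The utilization collapse described above can be illustrated with a toy two-stage bottleneck model. This is only a sketch under stated assumptions, not a measurement: the function name, the per-step timings, and the core counts are all hypothetical, and the model assumes enough requests are always in flight to keep the slower stage busy.

```python
def gpu_utilization(gpu_ms_per_step: float, cpu_ms_per_step: float, cpu_cores: int) -> float:
    """Steady-state GPU busy fraction in a toy two-stage pipeline.

    Assumption: each agent step needs cpu_ms_per_step of work on one CPU core
    (tool calls, parsing, queueing) and gpu_ms_per_step on a single GPU.
    """
    if gpu_ms_per_step <= 0 or cpu_ms_per_step <= 0 or cpu_cores <= 0:
        raise ValueError("all inputs must be positive")
    # Steps per millisecond that each side can sustain.
    gpu_rate = 1.0 / gpu_ms_per_step
    cpu_rate = cpu_cores / cpu_ms_per_step
    # The slower stage sets throughput; the GPU is busy for that share of its capacity.
    return min(gpu_rate, cpu_rate) / gpu_rate


# Hypothetical numbers, chosen only for illustration.
starved = gpu_utilization(gpu_ms_per_step=20, cpu_ms_per_step=400, cpu_cores=8)
fed = gpu_utilization(gpu_ms_per_step=20, cpu_ms_per_step=400, cpu_cores=32)
print(f"8 cores: {starved:.0%} GPU utilization; 32 cores: {fed:.0%}")
```

In this toy setup, eight cores keep the GPU busy only about 40% of the time, while 32 cores are enough to saturate it. Real systems add memory bandwidth, I/O, and queueing effects that this sketch ignores.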

Therefore, there is no doubt that the bottleneck of AI computing architecture is shifting fundamentally from GPUs, which focus on matrix multiplication and addition throughput, to data center CPUs that emphasize control flow, task orchestration, and memory/IO coordination. This shift stems from a fundamental migration in workload paradigms. CPUs are no longer merely general-purpose computing chips; they have become the control plane processors, system orchestration engines, and resource scheduling hubs of the intelligent agent era. The assertion that 'the underestimated CPU is becoming the new AI bottleneck' is not an emotional judgment but an inevitable outcome as AI workloads evolve from 'inference computation problems' to 'complex systems engineering challenges.'

In the early stages of large model inference, the predominant paradigm was 'single request—single generation,' with CPUs primarily handling data movement, request routing, and basic scheduling, serving as a typical auxiliary control plane. However, with the advent of AI agents and reinforcement learning, system loads have transitioned from simple forward inference to complex closed-loop processes involving task planning, tool invocation, sub-agent collaboration, environmental interaction, state management, and result verification. This 'orchestration layer' consists of CPU-intensive tasks characterized by strong control flow, heavy branch judgments, frequent system calls, and intensive memory access, which cannot be efficiently replaced by GPUs. Consequently, CPUs are transitioning from their previous role as 'supporting actors' to becoming the new bottleneck determining system throughput, latency, and resource utilization.

The latest forecast data from Morgan Stanley indicates that the explosive growth of intelligent agents marks a structural shift from computation to orchestration, projecting an incremental CPU market space of $32.5 billion to $60 billion by 2030. This will expand the total addressable market (TAM) for server-grade CPUs to between $82.5 billion and $110 billion. A TrendForce report predicts that in the era of AI agents, the CPU:GPU ratio may be significantly reassessed, shifting from the traditional 1:4 to 1:8 in AI data centers to a range of 1:1 to 1:2.
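The arithmetic behind these figures can be checked with a short sketch. Two points are assumptions rather than statements from the source: the baseline market size is only implied by subtracting the incremental figure from the total, and 'CPU:GPU' is read as CPUs per GPU. The 1,000-GPU cluster size is hypothetical.

```python
from fractions import Fraction

# Figures quoted in the text (USD billions, by 2030): (low end, high end).
INCREMENTAL_BN = (32.5, 60.0)
TOTAL_TAM_BN = (82.5, 110.0)

# Baseline implied by each end of the range: total minus incremental.
implied_baseline_bn = tuple(t - i for i, t in zip(INCREMENTAL_BN, TOTAL_TAM_BN))


def cpus_for(gpus: int, cpu_to_gpu: Fraction) -> Fraction:
    """CPUs needed for a given GPU count at a CPU:GPU ratio (CPUs per GPU)."""
    return gpus * cpu_to_gpu


GPUS = 1000  # hypothetical cluster size, for illustration only

# Traditional range 1:8 to 1:4; agent-era range 1:2 to 1:1 (low, high).
traditional = (cpus_for(GPUS, Fraction(1, 8)), cpus_for(GPUS, Fraction(1, 4)))
agent_era = (cpus_for(GPUS, Fraction(1, 2)), cpus_for(GPUS, Fraction(1, 1)))

# Smallest and largest implied increase in CPU count for the same GPU fleet.
min_multiple = agent_era[0] / traditional[1]
max_multiple = agent_era[1] / traditional[0]

print(f"Implied baseline (USD bn): {implied_baseline_bn}")
print(f"CPUs per {GPUS} GPUs: {traditional[0]}-{traditional[1]} -> {agent_era[0]}-{agent_era[1]}")
print(f"CPU count multiple: {min_multiple}x to {max_multiple}x")
```

Both ends of the quoted range imply the same baseline of about $50 billion, and the ratio shift implies roughly two to eight times as many CPUs for the same number of GPUs.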

Wall Street loudly proclaims that the uptrends for Advanced Micro Devices and Arm Holdings are far from over.

As of this writing, Intel's stock price hovers near $85, with intraday gains exceeding 27%, surpassing the optimistic target prices of most Wall Street analysts. However, Advanced Micro Devices and Arm Holdings remain some distance from their highest Wall Street target prices.

In a recent investor report, Morgan Stanley analysts led by senior Wall Street strategist Joseph Moore stated, 'The obvious beneficiaries of the strengthening CPU market—Intel and Advanced Micro Devices—operate within a somewhat complex strategic framework. Nevertheless, the exponential expansion of server CPU demand is crucial for the earnings outlook of both companies.'

'Between the two, we have a stronger preference for Advanced Micro Devices. Additionally, at this juncture, we believe memory chip manufacturers exhibit a significantly more favorable risk-reward ratio, with the memory theme being one of the direct beneficiaries of the expansion in CPU demand,' added the Morgan Stanley analysts led by Joseph Moore.

The D.A. Davidson team, led by veteran Wall Street analyst Gil Luria, chose to upgrade Advanced Micro Devices' (AMD.US) stock rating before the U.S. market opened on Friday following Intel's robust earnings report. They also significantly raised their 12-month target price to $375, the highest on Wall Street.

'We are upgrading AMD's stock rating from Neutral to Buy and raising our target price from $220 to $375, based on structural growth in CPU demand and significantly improved visibility into AMD's role in the great wave of data center construction. Given the extent to which Intel exceeded expectations, we believe AMD's earnings estimates have substantial upside potential, which will begin to reflect in AMD's March quarter results, scheduled for release on May 5,' said the D.A. Davidson team led by Gil Luria.

'We view Intel's performance as a prelude to a significant leap in AMD's CPU business and believe that the structural shift toward agent-based AI workloads is creating unprecedented demand for server CPUs. Given our assessment that demand will outstrip supply in the foreseeable future, AMD is well-positioned to implement substantial price increases across its product portfolio to support and expand margins,' added the D.A. Davidson team led by Gil Luria.

The core bullish thesis on ARM on Wall Street has now shifted from being a 'smartphone IP licensing company' to becoming one of the key beneficiaries of the supercycle in AI data center CPUs and Agentic AI infrastructure. In terms of price targets, Guggenheim, a well-known Wall Street investment firm, recently raised ARM's target price to the highest on Wall Street at $240. The rationale is that ARM is transitioning from a traditional provider of IP for smartphones and lightweight consumer electronics to a direct participant in AI data center silicon and supercomputing platforms.

According to an announcement released on Friday, $Amazon (AMZN.US)$ , the US cloud computing and e-commerce giant, has entered into a multi-billion-dollar long-term agreement with $Meta Platforms (META.US)$ , the parent company of Facebook. Under this deal, the social media giant will lease hundreds of thousands of Amazon’s self-developed ARM-based general-purpose data center server CPU chips for its large-scale new AI data centers to meet the massive artificial intelligence inference workloads required by Facebook and Instagram users.

Graviton, the ARM-based general-purpose server CPU developed by AWS, Amazon’s cloud computing division, primarily handles general computing, scheduling, data preprocessing/postprocessing, service orchestration, as well as some AI inference-related scheduling and coordination tasks within AI data centers.

For a company like Meta, which processes massive volumes of AI agent tasks, recommendations, advertisements, content generation, and query responses daily, many tasks do not require the full involvement of expensive GPUs. Extensive use of high-density ARM-based CPUs like Graviton, rather than Intel x86 architecture CPUs, to handle peripheral inference service loads can reduce per-request costs, free up GPUs for higher-value training and inference tasks, and improve overall cluster TCO. Arm Holdings also emphasized that the expansion of AI data centers is making low-power, high-efficiency ARM-based CPUs critical for orchestration, data processing, and system control. The increase to 192 cores in AWS’s fifth-generation Graviton reflects this growing demand for higher CPU density.

Arm is one of the biggest winners of the global AI boom. NVIDIA's self-developed Grace CPU is based on the ARM architecture, as are Amazon's self-developed Graviton server processors, Google’s Axion processors (its first-generation self-developed ARM-based data center CPUs, built on ARM Neoverse), and Microsoft Azure’s self-developed Cobalt 100 data center CPU. The ARM architecture is evolving from the “King of Smartphones” into one of the foundational computing infrastructures of the AI cloud era.

ARM’s adoption of a reduced instruction set computing architecture gives its server CPUs a significant advantage in energy efficiency and low power consumption when executing AI inference/training tasks compared to Intel’s x86 architecture. This feature makes the ARM architecture particularly suitable for data center servers, enabling it to efficiently complement AI GPUs to meet the seemingly insatiable demand for AI inference/training computing power.

Editor/KOKO